by Harold Burt-Gerrans

Welcome to Part 5. As promised in Part 4, I’ll start by discussing recursive de-duplication.

Recursive De-Duplication: Using Aliases Within De-Duplication

I can’t count the number of times that clients have complained about x.400/x.500 addresses in emails. Unfortunately, if the collected data comes with those address structures and not fred@xyz.com, we’re stuck with using them. Relativity and Ringtail have both introduced “People” or “Entity” type data structures (I’m sure other review tools have as well), but I think these structures are not used to their full potential yet.

Part of the de-duplication process should be to recursively substitute alias values in place of address strings so that multiple copies of the same email can be matched even when one copy has addresses formatted differently from the other copies. This does cause some grief when the use of aliases at a later date determines that two messages already promoted into the review stage are actually duplicates, especially if they have not been coded consistently. Without giving away every idea floating around in my noggin, I’ll just say that there are sound logical methods to deal with these issues if you prepare for them ahead of time.

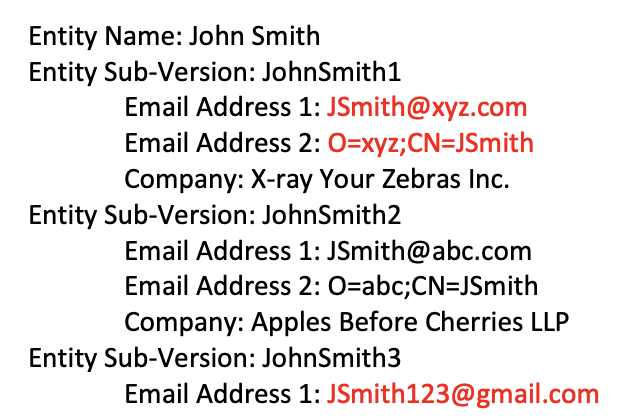

In conjunction with the introduction of aliases into the processing/de-duplication phases, consideration also needs to be given within the address structure to identify what I will call “Versions” of entities. For example, John Smith may have email addresses “JSmith@xyz.com”, “O=xyz;CN=JSmith”, and “JSmith123@gmail.com”. It should be maintained within the entity structure that the Gmail version of John Smith is not the same version John Smith indicated by the other two addresses (which are aliases of each other), as each version has its own set of metadata. Additionally, JSmith could be important to the matter and because he is working two jobs, he has email addresses for the separate jobs. Perhaps the Entity structure should be something like:

A structure similar to the above allows for the grouping of everything linked to John Smith, yet still differentiates between the different ways John Smith is known.

With every ingestion of data into the processing/review system, the Alias tables can be updated (likely manually) and the recursive de-duplication process should be run, substituting the primary email string for secondary email strings for each sub-entity. By using the above structures to update the deduplication, we can now identify that an email from O=xyz;CN=JSmith to fred@abc.com is the same message as another email that is from JSmith@xyz.com to O=abc;CN=Fred and the same as a third email from JSmith@xyz.com to fred@abc.com.

Time Zones

First, a fun fact: the earth rotates once every 23 hours, 56 minutes and 4 seconds, not every 24 hours. However, because of the earth’s path around the sun, it takes about 24 hours for a point on the earth to rotate back around to the same position in relation to the sun. Hence, a day = 24 hours. Coincidentally, the difference between 23:56:04 and 24:00:00 divided by 23:56:04 is approximately equal to 1 divided by 365, number of days in a year (not really a coincidence, mathematically it makes sense that it would be).

I don’t have the energy, or the interest, to investigate and detail the history of clocks, why the day is in 24 hours of 60 minutes of 60 seconds, or why new days start at midnight instead of sunrise. Regardless, since the beginning of time (Did time actually begin? Doesn’t 1 second before the beginning of time imply that it had already begun?), people have tracked time based on the sun. Long ago, sun-based devices, such as sundials, were invented to quantify units of time based on the movements of the sun. Somewhere along the way, people standardized on sunrises being around 7:00am, working hours of 9:00am to 5:00pm etc., but these were typically locally set times. As things began to be connected across long distances (e.g. by railroads) it became a requirement that the local times were co-ordinated, and eventually, international meetings established Time Zones synchronized to Greenwich Mean Time for worldwide time standardization. As a Canadian, I’m proud to remind everyone that a key player in this was Sir Sandford Fleming, a Scottish Canadian.

While Sandford made it easier for us to schedule things – If it is 1:00am, it is the middle of the night wherever you happen to be, 12:00pm in Ontario is 9:00am on the west coast of North America, etc. – we basically can predict what is happening elsewhere based on adjusting for the time zone. Since it’s midnight in Toronto, it’s 3:00pm in Sydney and people there must be at work, since I would be at work at 3:00pm (Oddly though, some of my western colleagues still think 2:30pm for them is a good time to start a meeting with me).

In eDiscovery, we typically ask what time zone to use for processing data. For regional matters, it’s almost always the time zone of the region. But, for national or international matters, it’s important to take into consideration how the data is used as well as displayed. For example, emails typically store the time in the native file in either UTC or local time with a UTC offset. But the output from processing is typically just a date and time, without UTC reference.

Consequently, if you process the emails from two custodians within their differing time zones, the extracted email dates will show the times as they related to the custodians and will not necessarily be in correct sequence for email threading. Hence, for consistency, it is usually best to process everything for a matter against a specific time zone – perhaps the time zone of the Judge is the best choice. What should be added to the processing environments and carried into the review platform are two or three dates/times:

- Date/time in the time zone of the matter (as the primary date/time for reference)

- Date/time in the time zone of the custodian (for local reference)

- Date/time in the time zone of the review team. This one is optional as often it is the same as one of the others. It is only useful to co-ordinate times / events from the reviewer’s prospective if they are in a completely different time zone.

A flexible method to do this would be to store all the dates in UTC within the review platform and allow a setting which forces their display as either static in a specific time zone or dynamic in the time zone of the person viewing the document.

One other thing to consider when reviewing emails is that the content of a thread may not be from consistent time zones. The date stamp on the most recent internal thread message is put there by the sender, so it is relative to who is sending the message out. In the example below, we processed John’s mailbox, in Ontario.

The interpretation of the timing of the above events changes significantly if:

a) Sara is in the same city as John and is interested in purchasing from John’s company. In this case, John appears to be a little slow at customer service. OR

b) John met Sara, who is in China, on InternationalDating.com. In this case, John replied in 1 minute, not 12 hours. Need I say more….

Just as a small rant… All the time zone problems in eDiscovery would go away if the world standardized on a single time zone. I’m sure I could adapt to a working 3pm – 11pm if my sunrise was at 2pm. And switching to working 2pm – 10pm when it would be Daylight Savings Time. I’m adaptable. I survived Y2K and the switch from Imperial to Canadian Metric.

I thought I might squeeze all my stuff into 5 parts, but it’s surprisingly easy to blow through 1,000 words. I have a little bit more left for Part 6, which should be the last one of the series and presents everything tied into my version of eUtopia.

Read part six here.

About The Author

Harold Burt-Gerrans is Director, eDiscovery and Computer Forensics for Epiq Canada. He has over 15 years’ experience in the industry, mostly with H&A eDiscovery (acquired by Epiq, April 1, 2019).